- Summary

- The page presents evaluation results for AI models in a sortable table, ranking model families such as Llama and Qwen across several metrics, including speed, VRAM requirements, and model complexity. Highly rated 8B-parameter models are listed, as are mixture-of-experts (MoE) models with 22B active parameters that offer fast inference. Providers such as Meta, Google, and Alibaba offer large-scale models with high popularity scores. Although some models appear to underperform, the data suggest strong demand for efficient architectures.

- Title

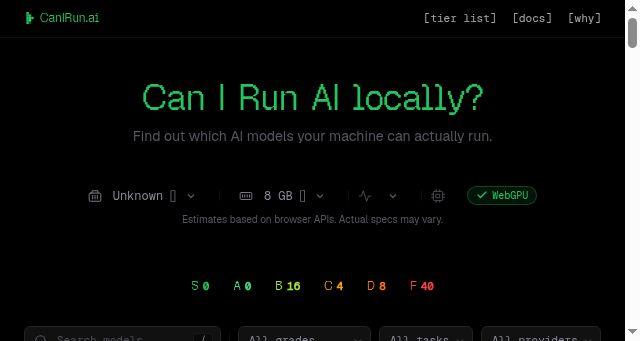

- CanIRun.ai — Can your machine run AI models?

- Description

- Detect your hardware and find out which AI models you can run locally. GPU, CPU, and RAM analysis in your browser.

- Keywords

- architecture, memory, apache, chat, gemma, reasoning, active, year, model, code, llama, vision, community, google, open, mistral, edge

- NS Lookup

- A 172.67.201.155, A 104.21.44.173

- Dates

- Created 2026-03-14, Updated 2026-04-06, Summarized 2026-04-07
